beginning stereo buffs get impressed by speakers and amplifiers, by wattage and weight. but any true audiophile will focus first upon source materials and components.

there is a reason that people will pay up for vinyl masters and turntables with prices like motorcycles and that reason is simple: once you have injected error into a signal, you cannot take it back out.

you lose fidelity. whatever error you pick up gets amplified through the system, pre-amp, amp, etc. a digital signal, no matter how clean, is not sound. you need a DAC to make it analog again. no speakers no matter how good can fix or replace what you lost. you are now in the realm of estimation and approximation. bad speakers or bad ears may forgive such failings, but the best ones will only serve to reveal them more sharply.

and so it is also with technocratic government: the data is the debate, and it’s the part of the debate that we are losing most badly because so few pay any attention to it.

the public punditry rages around policy and “decision makers” but what informs these people? the data. and who generates that data? who knows? you never hear their names or numbers called, but they are producing the “facts” that feed and inform all else.

who decided to define covid deaths as “any death with a positive PCR test at a 40 Ct” or even a death within 30 days of such a positive test?

who decided to count as a covid case any positive test result using a monstrously over-sensitive diagnostic underpinned by a regime of mass testing of the asymptomatic never before undertaken in human history?

this did not come out of nowhere. someone made these choices and determined what the “data” was going to be for this whole affair. and that person pivoted the whole world upon the fulcrum of this seemingly small technical choice.

does anyone have the slightest idea who it was or how these dodgy definitions came to dominate so much of the world so suddenly and in such stark departure from all past practice and from basic sense?

how different might the last 3 years have been had these countings been performed in the manner in which things like flu are traditionally counted?

THAT is the power of the denizens of data definition and the talliers of tables.

in a society as beset by regulators, experts, and agencies as our own, it has come to constitute a form of commanding heights in the war over the perception of consensus reality.

and this has become deeply dangerous as the folks producing data are not honest brokers nor interested in truth. most are incentivized to push narratives and crisis because it’s good for business. it expands agencies, keeps the grant money flowing, and guarantees sweet sinecures “in industry” later. it does not need to be a conspiracy (though i’m sure some try). it’s just an emergent phenomenon based on the self-interest and self-selection of the cogs of this machine.

pretty much no one goes into “temperature data study” because they are fascinated by thermometers. and once they have the jobs, their views on “activism” slant and inform their methods and choices especially because crisis is the path to prosperity.

words you will never hear:

“we the experts and technocrats empaneled to study this crisis have determined that it is, in fact, not a crisis. please accept the return of the remainder of our budget and put it to some other use.”

covid has been an explosion of bad data and data suppression. i wrote the other day about the ONS seeming to suppress data every time the series go against their preferred narratives and highlighting some concerns about the nature of their data’s quality that had been raised by gatopals™ martin neil and norman fenton who have done such great work here. i spoke also about the unsatisfying explanations the ONS was putting forward regarding this curtailment.

then we get this which you’re honestly going to have to read to believe.

they have outright admitted that their widely used data is not fit for purpose and should not be used to impute vaccine efficacy.

they were apparently concerned enough about this data to stop reporting it in may 2022, but it is only now, 8 months later, that they say so, after who knows how many people have relied upon it and its badly dated reliance upon the 2011 census to generate and support policies and personal choices.

and this is what i mean by “democracy dies in data adulteration.”

how is anyone to operate in such a system? we the people are getting tainted water straight from the well and so are those elected to govern. not even the devolution of rights to the individual promised by a republic can protect you from this because where are you going to get facts?

we’ve quite literally outsourced the definition of reality to a small group of technocrats in nondescript offices whose names we’ll never know, who self-selected for issue advocacy, and whose interests likely diverge severely from those of we the people.

they are the ones who establish the baseline of “what is going on.”

increasingly, you’re living in virtual reality and don’t know it.

and this issue is endemic.

the CDC has been just awful.

they have played games with data, failed to do their job as required on monitoring adverse events, only provided data under duress of FOIA and lawsuit, and continue to play down issues and play hide the ball to this day.

were it not for folks like aaron siri and ICAN endlessly suing the CDC and trying to pry this data from them, we’d probably have never seen any of this.

CDC counts of covid cases and deaths look to be outlandishly high because they used definitions and detection modalities that never made sense. even the NYT was dunking on them. and yet it seems an article of faith that covid killed a million americans. they reported case rates without reference to sample rate and mistook a massive rise in testing for a rise in covid. the issue was so severe it inverted the slope of the case curve.

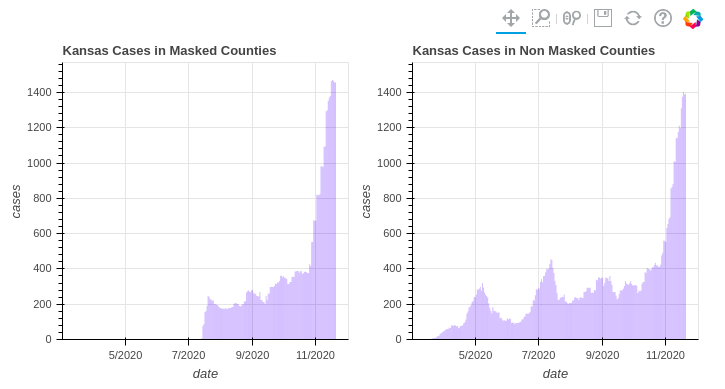
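a minimal sketch of the sample rate problem, in python. the weekly numbers are invented purely for illustration (they are not CDC data) and the function names are my own. the point is only that when testing doubles every week, raw case counts can climb while the share of tests coming back positive falls.

```python
# hypothetical weekly numbers, invented for illustration only (not CDC data)
tests = [10_000, 20_000, 40_000, 80_000]   # tests run each week
cases = [1_000, 1_600, 2_400, 3_200]       # positive results each week


def percent_positive(cases, tests):
    """positives per 100 tests: the case count adjusted for sample rate."""
    return [100.0 * c / t for c, t in zip(cases, tests)]


def trend(series):
    """+1 if the series ends higher than it starts, -1 if lower, 0 if flat."""
    delta = series[-1] - series[0]
    return (delta > 0) - (delta < 0)


positivity = percent_positive(cases, tests)

print("raw case trend:  ", trend(cases))        # rising
print("positivity trend:", trend(positivity))   # falling
```

same underlying results, opposite slopes. which one you report is a definitional choice, and it is exactly the sort of choice that rarely gets disclosed.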

they used a narrow cherrypick of this data in kansas to claim that “masks worked” and published it knowing full well it had been invalidated already.

if it were not for “selective disclosure” it would seem that the CDC would provide no disclosure at all…

we’re living in governance by funhouse mirror.

and if you think this is bad you should see what’s going on with climate science where the data is of such low quality, so adulterated, and so deliberately misrepresented that it takes 2 weeks just to really explain to someone how to read it critically. and yet this “data” produced by a bunch of people who you have never heard of is being amplified through a zillion watt PA system to not only justify wildly aggressive and ill conceived global energy policy, but to whip up public fervor and endlessly indoctrinate all of public education.

these people have been caught cheating and hiding the data so many times as to disqualify them from ever being trusted again. the “hockey stick” used by al gore and the IPCC in AR4 was a complete fraud. the math was so bad that it created hockey stick shapes from random number strings.

and the issues go all the way to the base data.

the US temperature system run by the NOAA has one-sided warming slanted error rates that far exceed the century scale signal they seek to measure. the system as a whole almost certainly has a > 2 degrees C warming bias from bad siting near heat sources, increased urbanization, and a reduction in sampling sites in rural areas.

and just like covid, they did not even know it because they steadfastly refused to look. they were not tracking the issue or making any real effort to assess whether their weather stations met their own siting guidelines. and again, just like covid, this was handled not by the state or the “experts” but by a few motivated individuals with help from a citizen army.

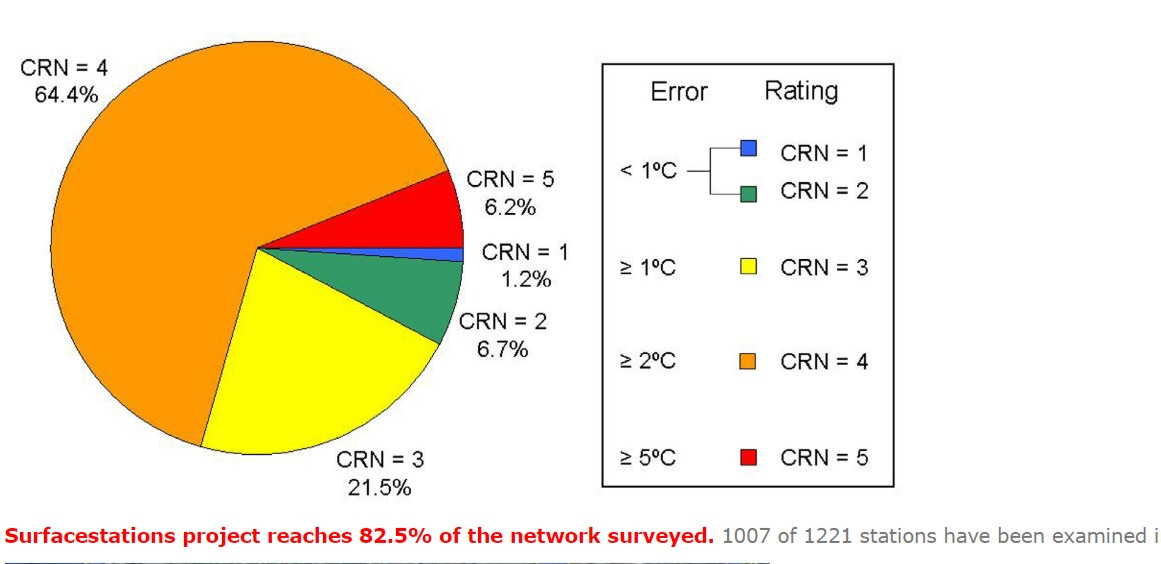

anthony watts and his “surface stations project” went out and performed the survey on their own using infra-red cameras and measuring tapes. they documented it meticulously. it’s all available on their website linked above. this was a truly wonderful project and run to the highest of standards. the key takeaway wound up being this:

fewer than 8% of the USHCN network’s stations meet its own (already quite lax) siting guidelines. over 70% have a warming bias in excess of 2 degrees C. this signal is so tainted that there is no way to get back at any sort of truth state. you’re trying to track an alleged temperature signal in the 0.1 degree per decade range using a system with an error rate that’s likely 20-40 times that.
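the arithmetic behind that “20-40 times” figure, as a quick python sketch. the 0.1 degree per decade trend and the 2 degree bias come from the paragraph above. the 4 degree upper bound is my assumption, back-solved from the 40x figure, and the variable and function names are just for illustration.

```python
trend_per_decade_c = 0.1   # alleged signal, degrees C per decade (from the text)
bias_low_c = 2.0           # siting bias, degrees C (from the text)
bias_high_c = 4.0          # assumption: upper bound implied by "40 times"


def bias_to_signal_ratio(bias_c, trend_c):
    """how many decades of claimed trend fit inside the siting bias."""
    return bias_c / trend_c


low = bias_to_signal_ratio(bias_low_c, trend_per_decade_c)
high = bias_to_signal_ratio(bias_high_c, trend_per_decade_c)

print(f"bias is roughly {low:.0f}x to {high:.0f}x the per-decade signal")
```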

the NOAA has done nothing about this. they are not adjusting temps down to try to compensate. they are adjusting them up and literally going back and reducing past temperatures to make the uptrends look steeper.

because that’s good for budgets and grant grabbing.

take a look at how GISS altered the data from the 1930’s when they “adjusted” the data in the late 90’s.

an agency should have its purview rescinded for getting caught on something like this.

instead they got a firehose of funding.

crisis is cashflow.

this is made all the more galling by the fact that we know for 100% certain that the NOAA knows better.

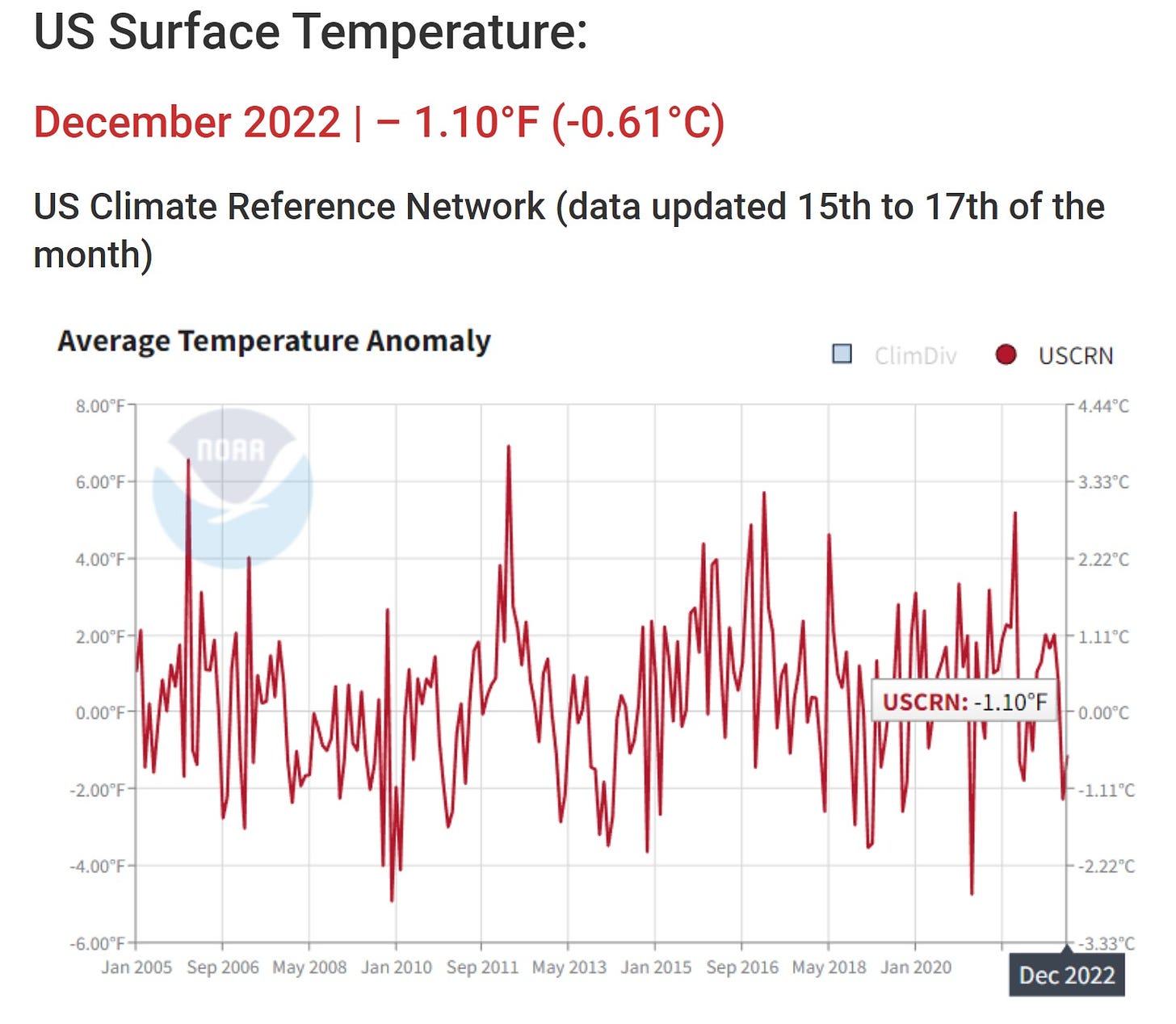

they have a network called the CRN reference network that is ONLY the well sited weather stations in continuous operation and covers the whole CONUS quite evenly. it only goes back 17 years, but it’s the best, clearest signal they have. yet it is rarely reported and they provide no data from it in easy to use forms nor any graphs of trends.

perhaps the reason is that when you graph it, it looks like this: (source, anthony watts)

not exactly “start eating bugs or we’re all going to die in 5 years” territory, is it?

and yet this “pure signal” is consigned to academic appendices and the music constantly blared from the speakers is the songs with the most distortion.

how is one to trust the agencies that make such choices?

the entire edifice of the regulatory and “scientific” state has been arrayed against we the people.

democracy has drowned in data adulteration and no one can trust ANY of this weaponized GIGO garble masquerading as “the science.”

it’s tainted all the way back to the basic sources and it’s time we fixed this.

demand full and open access to ALL data. public agencies do not serve the state, their own agendas, nor the preferences of their patrons: they serve the public.

it is not their data. it’s ours. we paid this piper and it’s high time he produced the sheet music.

toxic technocracy is the implacable enemy of human agency and thriving.

in this information age of instant on analysis and peer review by 100’s or 1000’s of actual experts crushing cloisters and opening up the grand bazaar of debate, discourse, and adversarial review that ought be the marrow of scientific endeavor, there is ZERO excuse for “hide the ball.”

every public agency purporting to produce “data” must be open to public audit and every datum and dataset available for analysis and validation right down to the gradations on the rulers.

until that time, there is simply no basis for trust.

these organizations have disgraced themselves and been caught cheating and suppressing so many times, up to and including manipulating media and social media to stifle and censor dissent, that one would truly have to be a special kind of stupid to be willing to play another game of “close your eyes and open your mouth” over the fate of the world with them.

to these agencies, my admonition is simple:

with authority comes accountability.

if you’re so sure you’re right, let us check your work.

accept REAL peer review, not the cloistered clubhouse of confirmation bias you’re currently running.

instead of making the fentons and neils and siris and wattses fight you tooth and claw for data scraps or do your jobs for you:

become full and freely transparent or accept that the base state for belief in you evermore shall be

Economic data is highly suspect as well. Massive Tech and finance layoffs happening, yet we are supposed to believe it’s the lowest unemployment ever? Eggs are $10 per dozen in some cities, yet we are supposed to believe inflation is coming down?

We had dinner with liberal friends last night. I have no family so I am loath to cut anyone out of my life at this point - but…..

They have every Covid shot that has come out, they still got Covid but proudly bragged on taking Paxstupid and then asked us if we had taken the latest ‘bi-valent (nightmare) shot’. When we said ‘no, and given we have had Covid and survived quite nicely - we aren’t taking another, not even a Flu shot’. After sputtering several statements masquerading as questions about ‘the science’ and not liking our simple refutations, we got: ‘well, then wallow in your ignorance!’.

And you as well my friends.

PS: we did not bring this topic up. They did through telling us about how a major gathering they sponsor had provided masks (occurred September 2022) and no one would wear them. They had to spend hours of volunteer time picking them up off the streets and in the venue plus - the waste of money.

Ahem: there’s your sign.